The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

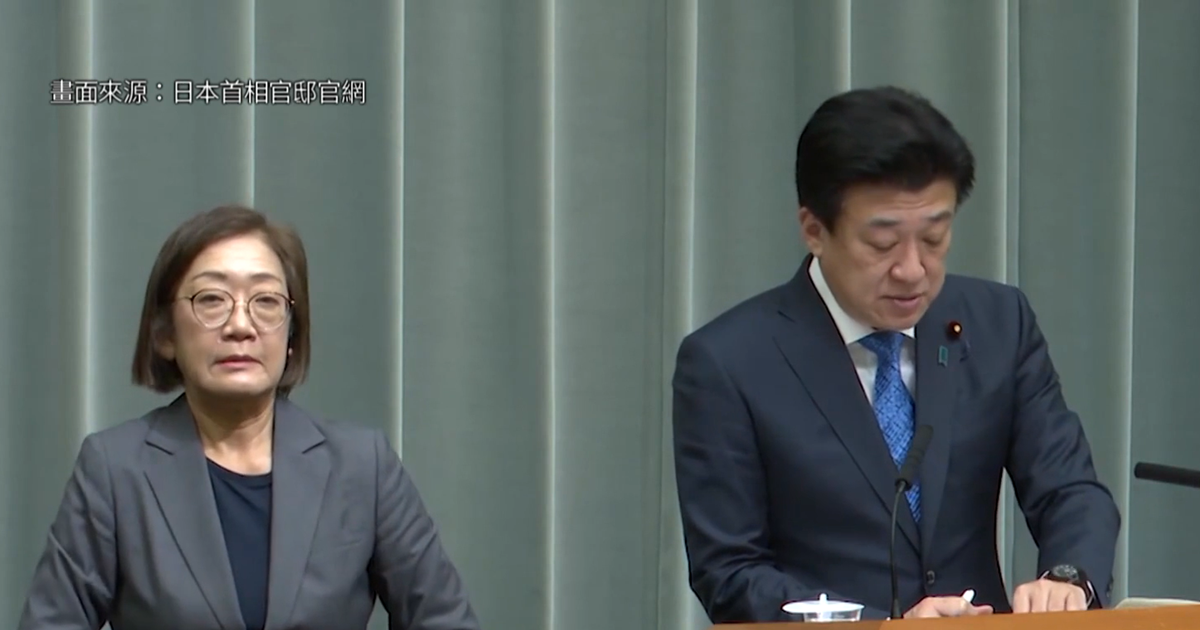

OpenAI revealed that Chinese officials used ChatGPT to document and facilitate large-scale cross-border intimidation and disinformation campaigns, including impersonating U.S. officials to threaten dissidents, fabricating death notices, and attempting to smear Japan's Prime Minister. These AI-enabled actions resulted in real-world harm, violating human rights and spreading misinformation globally. [AI generated]
